How we review.

The standards behind every CAT Outdoors review.

We test what we review.

Every product that gets a CAT Outdoors review score has been in our hands. Not a press release, not a spec sheet, not a YouTube highlight reel. The actual product, used the way our readers would use it. That means range time, real ammo, real conditions, and enough hours to form an opinion that holds up.

Some reviews are written by our team. Some are written by collaborators with backgrounds we trust: military, law enforcement, competition, cinematography, gunsmithing. They put the gear through its paces and report back. Either way, what you read came from someone who pulled the trigger, ran the rifle, or wore the kit.

We don’t repackage other sites’ reviews. We don’t run AI-generated copy. If we haven’t tested it, it doesn’t get a score.

How we source products.

We tell you exactly where every product came from. In every review, we disclose whether we bought the product at retail (and what we paid), whether the manufacturer sent it as a free sample, or whether it came to us as a test and evaluation unit we’ll return after the review.

A free sample doesn’t change our verdict. We’ve kept negative reviews on samples we were sent, and we’ve passed on writing about samples we weren’t impressed by rather than soften the assessment. Editorial control stays with us. Brands don’t get pre-publication review. They don’t approve scores. They don’t choose which products we cover.

How scoring works.

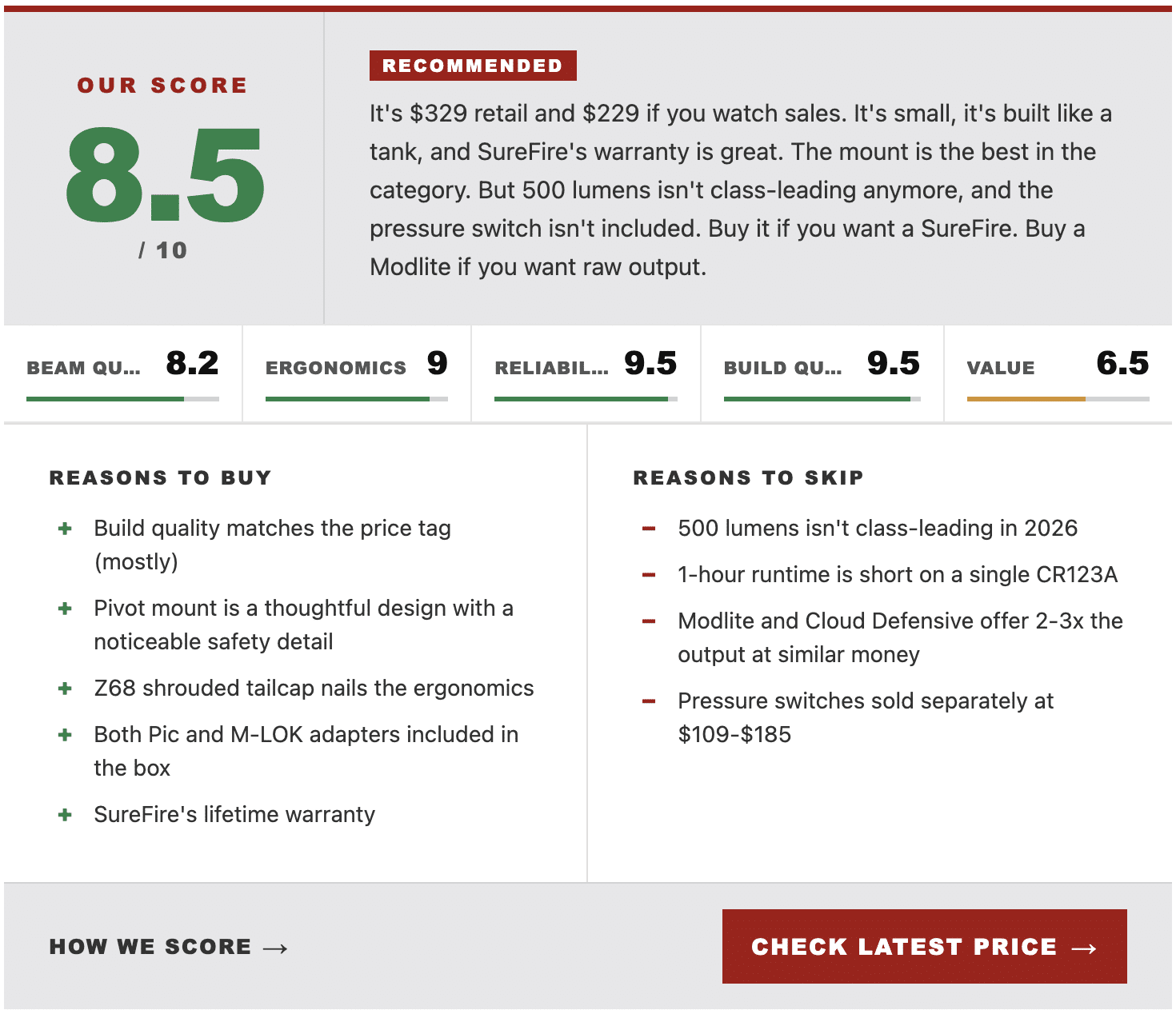

Every review gets a score out of 10, broken down into product-specific sub-categories also rated on a 10-point scale. The overall score is an average of the sub-category scores. A bipod is judged on different things than a gas mask, so the sub-categories shift to fit what actually matters for that product.

Here’s what a CAT Outdoors review score looks like:

Scoring isn’t a science. Two reviewers won’t always land on the exact same number. But the sub-categories are consistent within a product class, and a 7 means the same thing across our reviews.

Verdict tags like RECOMMENDED appear only on products that genuinely earn them. Some products don’t get a tag. We don’t hand out participation trophies.

When we recommend against a product.

Not every product is worth buying. When something falls short of what its price and marketing promise, we say so.

Our review of the CVLIFE Paracord Sling is one example: a product we tested, didn’t trust, and called out by name in our rifle slings roundup. We’d rather lose an affiliate commission than send a reader toward gear that’ll fail them.

If we change our mind on a product after publication, whether from better testing, longer-term wear, or a manufacturer revision, we update the review and note the change.

How we make money.

Affiliate marketing pays for what we do here. When you click a product link and buy something, we may earn a commission from that retailer. The price you pay doesn’t change. If you don’t click or don’t buy, we don’t earn anything.

Our affiliate relationships don’t influence our coverage. Retailers don’t choose what we review or how we score it. We pick the products; we pick the verdicts; the affiliate links come after the editorial decision is already made.

Corrections and updates.

Gear changes. Manufacturers revise products. New competitors appear. We update reviews when something material has changed: a new model, a recall, a price shift that changes our value assessment, a long-term reliability issue we couldn’t have caught at launch.

When we make significant updates to a review or a roundup, we add a changelog at the bottom of the article noting what changed and when. Smaller copy edits and ongoing tweaks don’t get logged; otherwise we’d spend more time annotating than writing.

If you spot a factual error in one of our reviews, tell us. We’ll look at it and fix what needs fixing.